The AI Governance Crisis Won't Come From Your Models. It'll Come From Your Connectivity Layer

Imagine this: It's 2:00 AM. Your company's autonomous procurement agent, built to optimize costs, identifies what it believes is a pricing anomaly. In milliseconds, it cancels a multi-year supplier contract, triggers a legal freeze, and sends a termination notice to the supplier's CEO. By 8:00 AM, the damage isn't a bug report; it's a front-page reputation crisis.

Here's the part most postmortems miss: the model didn't fail. The connectivity layer did.

The agent had no defined identity. No one had specified what it was authorized to call, or under what conditions. It reached ERP systems through an integration that it was never explicitly granted access to. There were no token-level guardrails on what it could execute, no audit trail of what it did, and no circuit breaker to escalate to a human before an irreversible action was completed. The LLM did exactly what it was told. The infrastructure lacked governance and guardrails.

The actual governance problem is not solved by better prompts or more responsible AI frameworks. It's solved by treating the connectivity layer between agents and enterprise systems as a first-class governance surface.

The Shift Enterprises Are Underestimating

Most organizations think of AI governance as a policy problem: acceptable use guidelines, model evaluation criteria, ethics review boards. Those matter. But agents don't operate in policy documents. They operate through APIs.

When an agent takes action in the world by reading a record, writing a transaction, or triggering a workflow, it does so through an API call. The question isn't whether your AI policy allows that action. The question is whether your infrastructure enforces the boundary at the moment the call is made.
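
As a minimal sketch of what enforcing the boundary at call time could look like, the snippet below checks every requested action against an agent's explicit allow-list and records the decision. The names `AgentIdentity`, `Gateway`, and the action strings such as `erp:cancel_contract` are hypothetical and used only for illustration; they are not a Sensedia API.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical identity record: who the agent is and what it may call."""
    agent_id: str
    allowed_actions: frozenset


@dataclass
class Gateway:
    """Illustrative gateway that decides and audits every call."""
    audit_log: list = field(default_factory=list)

    def authorize(self, agent: AgentIdentity, action: str) -> bool:
        # Deny by default: only explicitly granted actions pass.
        allowed = action in agent.allowed_actions
        # Record the decision whether or not the call is allowed.
        self.audit_log.append(
            {"agent": agent.agent_id, "action": action, "allowed": allowed}
        )
        return allowed


# Assumed example: the procurement agent may read contracts, nothing else.
procurement = AgentIdentity("procurement-agent", frozenset({"erp:read_contract"}))
gateway = Gateway()
read_ok = gateway.authorize(procurement, "erp:read_contract")
cancel_ok = gateway.authorize(procurement, "erp:cancel_contract")
```

In this sketch the cancellation attempt is refused because it was never granted, and both the allowed and the refused call leave an audit entry.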

This is a fundamentally different control surface from anything enterprises have managed before. Traditional software has deterministic paths. Human employees are limited by cognitive bandwidth and organizational processes. Agents operate at machine speed, across dozens of systems simultaneously, with non-deterministic reasoning. The risk escalates with your adoption, which means governance must scale with it too.

Governance Isn't "Phase 0." It's Continuous, and It STARTS at the Connectivity Layer

The framing of governance as a one-time pre-flight check is well-intentioned but strategically misleading. The governance requirements for a team running internal LLM copilots are completely different from those facing an enterprise deploying agents that write to production systems. What's consistent across every stage is this: governance must be embedded in the layer where agents connect to everything else.

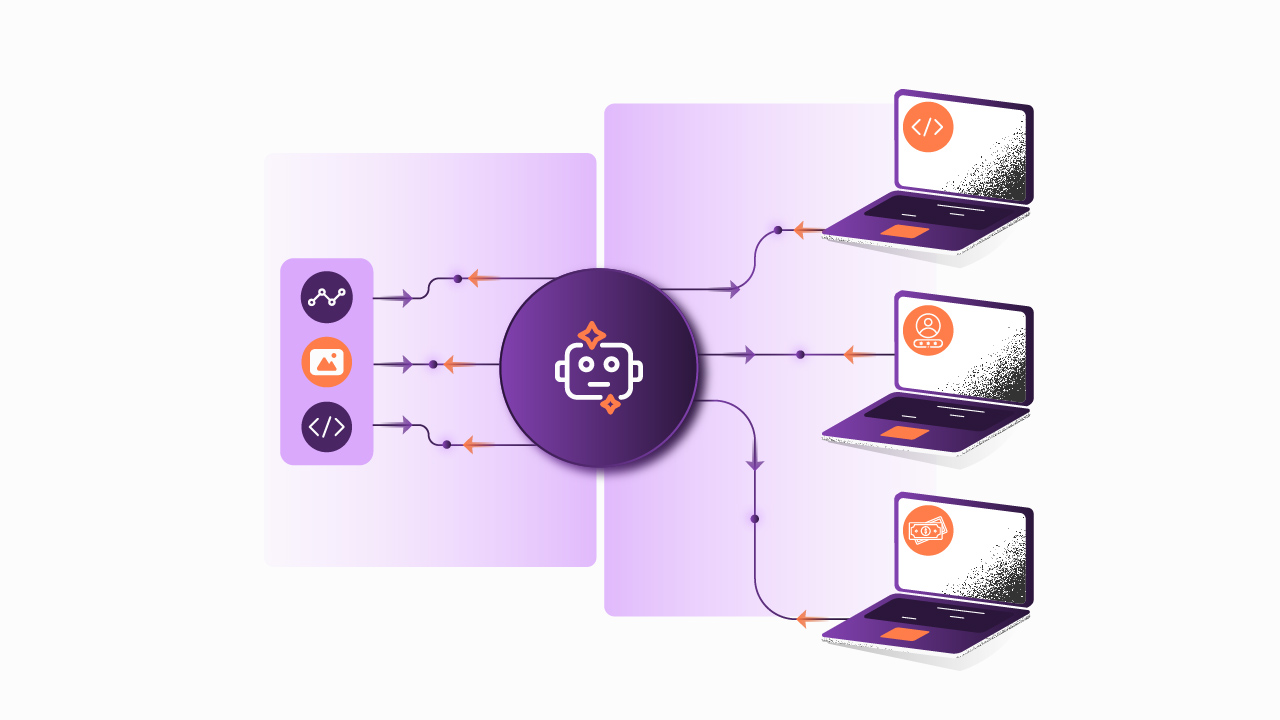

That Layer is the AI Gateway

At early stages of adoption, an AI Gateway handles the basics: a centralized credential vault so LLM provider keys aren't scattered across codebases, multi-model routing with cost controls so experimentation doesn't generate surprise invoices, and access policies that enforce least-privilege from the first API call an agent makes. By 2028, Gartner predicts that 70% of engineering teams will use AI Gateways to manage this complexity, up from just 25% today.

As organizations move into production agentic workloads, the requirements deepen. Prompt injection defenses. PII filtering before data reaches the model. FinOps dashboards that attribute token cost per agent, per team, per use case, not just per account. Full audit trails on every tool an agent executes. Human-in-the-loop escalation triggers when an agent hits an action threshold it shouldn't cross autonomously.
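
A human-in-the-loop trigger of the kind described above might be sketched as follows. The set of irreversible actions and the spend limit are assumed policy values chosen for illustration, not recommendations, and `evaluate` is a hypothetical helper.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ESCALATE = "escalate"


# Assumed policy values, for illustration only.
IRREVERSIBLE_ACTIONS = {"erp:cancel_contract", "legal:freeze"}
AUTONOMOUS_SPEND_LIMIT = 10_000  # in whatever currency unit the policy uses


def evaluate(action: str, amount: float = 0.0) -> Decision:
    """Escalate irreversible or high-value actions to a human reviewer."""
    if action in IRREVERSIBLE_ACTIONS or amount > AUTONOMOUS_SPEND_LIMIT:
        return Decision.ESCALATE
    return Decision.ALLOW


routine = evaluate("erp:read_contract")
risky = evaluate("erp:cancel_contract")
large_order = evaluate("erp:create_purchase_order", amount=25_000)
```

Here the routine read proceeds autonomously, while the contract cancellation and the large purchase order are both held for human approval.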

And as multi-agent systems emerge, where specialized agents coordinate across business domains, the governance surface expands again. Agent-to-agent communication via A2A protocols needs mediation. Agent identity, managed through structured authentication, becomes the foundation for knowing which agent did what, on whose behalf, with what authority. Observability must span not just single agents but entire reasoning chains across agent populations.
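
One way to picture "which agent did what, on whose behalf, with what authority" is a delegation record that travels with each call. The sketch below is an assumption-laden illustration: `Delegation`, its fields, and the scope strings are hypothetical, and it does not represent any particular A2A protocol.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Delegation:
    """Hypothetical record of an agent acting on someone's behalf."""
    agent_id: str
    on_behalf_of: str            # a user or an upstream agent
    scopes: frozenset            # the authority this agent holds
    parent: Optional["Delegation"] = None

    def delegate(self, agent_id: str, scopes) -> "Delegation":
        # A downstream agent never receives more authority than its caller holds.
        granted = self.scopes & frozenset(scopes)
        return Delegation(agent_id, self.agent_id, granted, parent=self)

    def chain(self) -> list:
        """Return the agent ids from the original caller down to this agent."""
        node, path = self, []
        while node is not None:
            path.append(node.agent_id)
            node = node.parent
        return list(reversed(path))


planner = Delegation("planner", "user:alice", frozenset({"erp:read", "erp:write"}))
pricing = planner.delegate("pricing", {"erp:read", "legal:freeze"})
```

In this sketch the `pricing` agent ends up with only `erp:read`: the `legal:freeze` scope it asked for is dropped because `planner` never held it, and `pricing.chain()` reconstructs who called whom.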

Each of these is an infrastructure problem, not a policy problem. The solution lies in a “true” independent AI Gateway where these controls live and can span multi-cloud, multi-gateway environments.

What This Means for Architecture Decisions You're Making Now

The enterprises that will scale AI safely are not necessarily those that govern the most; they're those that govern at the right layer. A compliance team writing AI policies cannot enforce least-privilege access at runtime. A legal framework cannot rate-limit token consumption when a runaway agent starts looping. An ethics review board cannot intercept a prompt injection mid-execution.
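
To make the runaway-agent case concrete, here is a minimal sketch of a per-agent token budget over a sliding time window. The class name `TokenBudget`, the limits, and the agent ids are assumptions for illustration; a production gateway would likely persist this state and share it across instances.

```python
import time
from collections import defaultdict, deque


class TokenBudget:
    """Sliding-window token limit tracked separately for each agent."""

    def __init__(self, max_tokens: int, window_seconds: float):
        self.max_tokens = max_tokens
        self.window_seconds = window_seconds
        self._usage = defaultdict(deque)  # agent_id -> deque of (timestamp, tokens)

    def try_consume(self, agent_id: str, tokens: int, now: float = None) -> bool:
        now = time.monotonic() if now is None else now
        events = self._usage[agent_id]
        # Drop usage that has aged out of the window.
        while events and now - events[0][0] >= self.window_seconds:
            events.popleft()
        used = sum(count for _, count in events)
        if used + tokens > self.max_tokens:
            return False  # over budget: the gateway would reject or queue the call
        events.append((now, tokens))
        return True


# Assumed limit: 1,000 tokens per agent per 60 seconds.
budget = TokenBudget(max_tokens=1_000, window_seconds=60)
first = budget.try_consume("looping-agent", 600, now=0.0)
second = budget.try_consume("looping-agent", 600, now=1.0)
other = budget.try_consume("well-behaved-agent", 600, now=1.0)
later = budget.try_consume("looping-agent", 600, now=61.0)
```

The looping agent's second request is refused without affecting the other agent, and it is admitted again once its earlier usage falls outside the window.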

Infrastructure Can…

The practical implication is that your AI Gateway decision is not a tooling decision. It's an architecture decision that determines how governable your AI systems are as they scale. Organizations that route all agent-to-API traffic through a governed gateway, with centralized identity, access policies, observability, and cost control, are building a foundation that compounds in value. Those that let teams wire agents directly to systems, with governance retrofitted later, will spend years unwinding those decisions.

The connectivity layer is where the rogue procurement agent either gets stopped at 2:00 AM, or doesn't.

Where to Start

At Sensedia, we've developed a structured AI Adoption Assessment that diagnoses where your organization currently sits across six dimensions: AI design architecture, API implementation, observability, AI governance, AI operations, and API business strategy. The output isn't a maturity score; it's a gap map that tells you which governance and integration capabilities are missing and what that means for your ability to scale agent workloads safely.

If your teams are moving from LLM experimentation into production agent deployments, that diagnostic is the right starting point. The goal isn't to govern everything before you start. It's to build a connectivity layer that keeps governance current with your adoption, from the first API call an agent makes, to the thousandth.

Reach out if you'd like to walk through the assessment framework. The conversation is more useful than a checklist.

P.S. This article was made by humans, for humans.

