Shadow AI in Enterprises: Real Risks and How to Govern Before It Hurts

The democratization of Artificial Intelligence has brought significant productivity gains. However, it has also made room for a silent phenomenon: Shadow AI. This occurs when employees use AI tools without the knowledge or control of the organization, particularly the security team. The result can be exposure to data leaks, compliance failures, reputational damage, and other significant impacts.

CISOs, CTOs, and product leaders face the challenge of proving value with Responsible AI while Shadow AI usage spreads across organizations.

This article discusses the impact of this phenomenon, why AI governance matters, and practical ways to minimize the risks.

Why Dealing with Shadow AI Matters

The number of AI tools continues to grow. Even the browsers we use every day now integrate AI features capable of performing tasks semi-autonomously. Recently launched products such as OpenAI's ChatGPT Atlas and Perplexity's Comet can be useful and are gaining users, but they pose challenges to AI governance.

In 2024, a study by the National Cybersecurity Alliance in partnership with CybSafe revealed that 38% of employees admitted to sharing sensitive information with AI tools without their employer's knowledge—a percentage that reaches 46% among Gen Z and 43% among Millennials.

Recent data leaks reinforce the cost of uncontrolled data exposure. In 2024, AT&T reported that call and SMS records of "nearly all" its customers were exposed—a reminder that telemetry and metadata are valuable and sensitive.

Beyond the operational impact, Shadow AI increases the cost of breaches. Under laws such as LGPD and GDPR, fines can be higher when a company cannot demonstrate that it had adequate, auditable security and privacy controls in place. In short: weak governance can be very expensive.

So, how do you reduce risk without killing innovation?

As the challenge grows, it is essential to implement policies, processes, and tools. Below, we list three practical fronts:

1) Catalog of Approved Tools and Clear Policies

Define a simple AI usage policy (what is allowed/disallowed, permitted/prohibited data) and publish a catalog of approved tools, including their purposes, data scopes, responsible departments, and contacts. Make the official path easy so people don’t seek shortcuts.
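To make the catalog idea concrete, here is a minimal sketch of how it could be represented as data and queried. Everything in it is an assumption for illustration: the tool names, data classifications, departments, contacts, and the `check_usage` helper are hypothetical, and a real catalog would follow your own policy.

```python
from dataclasses import dataclass

# Hypothetical data classifications; a real policy would define its own.
PUBLIC, INTERNAL, CONFIDENTIAL = "public", "internal", "confidential"


@dataclass(frozen=True)
class ApprovedTool:
    name: str
    purpose: str
    allowed_data: frozenset  # data classes the policy permits for this tool
    owner_department: str
    contact: str


# Illustrative entries only; names and contacts are made up.
CATALOG = {
    "internal-chat-assistant": ApprovedTool(
        name="internal-chat-assistant",
        purpose="Drafting and summarizing internal documents",
        allowed_data=frozenset({PUBLIC, INTERNAL}),
        owner_department="IT",
        contact="ai-governance@example.com",
    ),
    "code-helper": ApprovedTool(
        name="code-helper",
        purpose="Code completion for non-production repositories",
        allowed_data=frozenset({PUBLIC}),
        owner_department="Engineering",
        contact="platform-team@example.com",
    ),
}


def check_usage(tool_name: str, data_class: str) -> str:
    """Return a short policy decision for using a tool with a data class."""
    tool = CATALOG.get(tool_name)
    if tool is None:
        return "not approved: request a review"
    if data_class not in tool.allowed_data:
        return f"approved tool, but {data_class} data is prohibited; contact {tool.contact}"
    return "allowed"
```

Keeping the catalog as structured data means the same source can feed the published page people read and the automated checks described in the next section.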

2) Continuous Monitoring of AI Usage

- Discovery: Build an inventory of calls to AI services (firewalls and AI gateways or proxies help).

- Observability: Monitor prompts, data origins, and destinations.

- Alerts: Trigger notifications for uploads of PII (Personally Identifiable Information), secrets, or volumes outside of policy.

- Periodic Audits: Review integrations, keys, and permissions.
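As a rough illustration of the discovery step, the sketch below counts calls to AI services from proxy records and lists the users reaching unapproved ones. It assumes the records are already parsed into `(user, host)` pairs; the host sets, including the internal gateway hostname, are hypothetical and would come from your own inventory.

```python
from collections import Counter

# Assumption: hostnames the organization treats as AI services.
KNOWN_AI_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "ai-gateway.internal.example.com",  # hypothetical internal gateway
}
# Assumption: the subset covered by the approved catalog.
APPROVED_HOSTS = {"ai-gateway.internal.example.com"}


def inventory(records):
    """Count AI calls per (host, is_approved) from (user, host) records."""
    counts = Counter()
    for _user, host in records:
        host = host.lower().strip()
        if host in KNOWN_AI_HOSTS:
            counts[(host, host in APPROVED_HOSTS)] += 1
    return counts


def unapproved_users(records):
    """Return the users who called a known but unapproved AI host."""
    unapproved = KNOWN_AI_HOSTS - APPROVED_HOSTS
    return {user for user, host in records if host.lower().strip() in unapproved}


records = [
    ("ana", "api.openai.com"),
    ("ana", "ai-gateway.internal.example.com"),
    ("bob", "API.OPENAI.COM"),
    ("carol", "intranet.example.com"),  # not an AI service, ignored
]
print(inventory(records))
print(sorted(unapproved_users(records)))
```

A static host list only catches services you already know about, so it works best alongside the periodic audits above.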

Useful Indicators:

- Percentage of active users on approved vs. unapproved tools.

- Incidents prevented by DLP (PII/secret blocking).

- Approval time for new AI integrations.

- Training coverage (and quick retention checks).
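The first indicator can be computed in a few lines. This sketch is one possible reading of it: the share of active users who used only approved tools versus those who used at least one unapproved tool. The `usage_split` function and the sample data are hypothetical.

```python
def usage_split(user_tools: dict, approved: set) -> tuple:
    """Return (% of active users on approved tools only, % on any unapproved tool)."""
    # A user counts as active if at least one AI tool was observed for them.
    active = {user: tools for user, tools in user_tools.items() if tools}
    if not active:
        return (0.0, 0.0)
    on_unapproved = sum(1 for tools in active.values() if set(tools) - approved)
    pct_unapproved = 100.0 * on_unapproved / len(active)
    return (round(100.0 - pct_unapproved, 1), round(pct_unapproved, 1))


observed = {
    "ana": {"gateway"},
    "bob": {"gateway", "unvetted-chatbot"},
    "carol": {"unvetted-chatbot"},
    "dan": set(),  # no AI usage observed, so not counted as active
}
print(usage_split(observed, approved={"gateway"}))
```

Tracking this split over time shows whether the official path is actually becoming the easy one.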

3) Continuous Training

Run recurring training with short, practical content. The Annual Cybersecurity Attitudes and Behaviors Report 2024–2025 indicates that most of the professionals surveyed have yet to receive adequate training on AI risks, so ongoing education on the security risks of AI usage is vital.

What Not to Do

- Ban everything: Total blocks push usage off the radar, which increases Shadow AI. Instead, implement discovery and monitoring mechanisms to identify unauthorized use and address the root cause (through training, procurement, or developing internal alternatives).

- Keep logs in the dark: Without observability of prompts and data, there is no way to audit or respond to incidents. Ensure all AI usage is auditable, with logs that support both preventative analysis and investigation.

- Train once and "call it done": The landscape changes fast. A continuous program is mandatory.

I Found Unauthorized Use. Now What?

It is almost certain that, in any organization, people are using various AIs—some without authorization. Upon detecting Shadow AI, four paths are possible, each with its trade-off:

- Build internal AI agents: Replace Shadow AI use if the value justifies it.

  - Pros: Control and customization; adherence to policies and audits.

  - Cons: Higher development and maintenance costs.

- Contract SaaS services: Replace Shadow AI with a contracted service.

  - Pros: Faster deployment; SLA, NDA, DPA, and other clauses that strengthen governance.

  - Cons: Residual third-party risk and dependency on the supply chain.

- Authorize/Contract the identified AI: When there is proven value.

  - If the benefits outweigh internal alternatives, formalize the procurement and place it under corporate governance.

- Block and prohibit use: When the risk is high.

  - For services without adequate controls, block access and openly communicate the reason for the block.

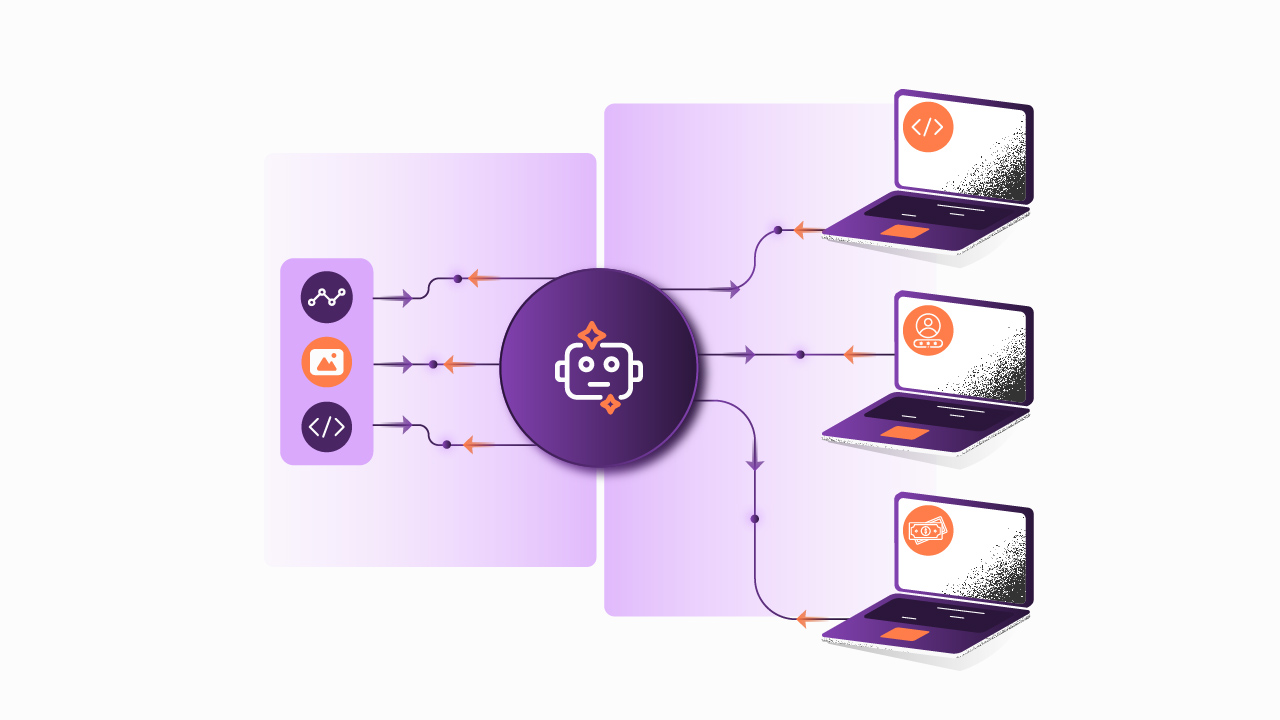

The Role of the AI Gateway

We use the term AI Gateway for the class of solutions that includes Agentic Gateways, LLM Gateways, and the like. From a security perspective, these components centralize AI traffic and offer:

- Prompt telemetry, audit logs, access control, and guardrails.

- Standardization of keys and endpoints from multiple providers under a single plane.

- Observability and corporate policies applicable to internal agents and SaaS services.

Remember: Shadow AI usually does not pass through the gateway precisely because it is outside of governance. Still, even internal agents may include tools, libraries, or models that escaped security review. Hence the importance of:

- Connecting the AI/Agentic Gateway to the SIEM.

- Generating alerts for PII violations (LGPD/GDPR) and other policies.

- Establishing periodic audits and improvement cycles led by the security team.


Best Practices for Using an AI Gateway

- Route all AI traffic through the gateway, with no exceptions.

- Apply least privilege.

- Configure DLP, content safety, rate limiting, and other security guardrails via the AI Gateway.

- Maintain immutable logs and metrics (blocked prompts, usage/token volume, costs per agent).
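To illustrate what a DLP guardrail at the gateway might look like, here is a minimal sketch that screens a prompt before it is forwarded to a provider. It is not how any particular gateway product works: the `screen_prompt` function is hypothetical, and the three regular expressions (email, Brazilian CPF format, and key/secret/token assignments) are simplified stand-ins for a real DLP engine.

```python
import re

# Simplified, illustrative patterns; real DLP engines use far richer detection.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "cpf": re.compile(r"\b\d{3}\.\d{3}\.\d{3}-\d{2}\b"),
    "secret": re.compile(r"(?i)\b(?:api[_-]?key|secret|token)\s*[:=]\s*\S+"),
}


def screen_prompt(prompt: str):
    """Redact matches and return (redacted_prompt, sorted finding labels)."""
    findings = []
    redacted = prompt
    for label, pattern in PATTERNS.items():
        # subn returns the new string and the number of replacements made.
        redacted, hits = pattern.subn(f"[{label.upper()} REDACTED]", redacted)
        if hits:
            findings.append(label)
    return redacted, sorted(findings)


text, found = screen_prompt("Contact maria@example.com, CPF 123.456.789-09, api_key=abc123")
print(text)
print(found)
```

Whether the gateway redacts, blocks, or only alerts on a finding is a policy decision. In any of those cases, the list of findings is what would be written to the audit log and forwarded to the SIEM.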

Conclusion

In practice, Shadow AI is a governance deficit that grows in proportion to the pressure for productivity and innovation. When IT and Security offer easy and safe paths, combined with monitoring, education, and executive sponsorship, innovation flourishes within the rules.

Treating AI governance as a strategic capability, rather than just compliance, is essential to boosting productivity while avoiding the cost of leaks.
